Kellyanne Conway, Counselor to the President, suggested on “Meet the Press” that the Trump administration will rely on alternative facts when objective reporting does not suit its needs. Clearly, this is nothing new for most politicians, the pharmaceutical industry, tobacco companies, weight loss plans, and advertising in general. On the other hand, I wonder whether alternative facts are really so bad.

First, we need to define “facts”. Webster defines a fact as “the quality of being actual; something that has actual existence; an actual occurrence, or information presented as having objective reality.” Alternative is defined as “offering or expressing a choice; differing from the usual or conventional; existing or functioning outside established cultural, social, or economic systems.”

Without getting into a discussion of philosophy, let’s take an area of inquiry that deals in facts, and that is science. Science seeks to explain how and why natural phenomena work (e.g. nuclear fusion, embryogenesis, neural transmission in the mammalian brain, cancer, animal and human behavior). To “do science” one must employ the scientific method, and while I am not going to review the scientific method, there are a couple of points worth considering.

First, scientific explanations are based on observable, reproducible data. Only when an explanation is replicated can we talk about a scientific fact. Now here is the tricky part. If the essence of science is replication, and replication requires duplicating a set of actions and repeatedly obtaining the same outcome, then how can we ever expect that we can make decisions based on facts in the real world? At best, it is possible only under laboratory conditions. When it comes to the world outside the laboratory, the task is a bit more difficult.

Here is where we get into alternative facts, from the perspective of science. Even though scientists may specify the conditions under which the experiment or observations were conducted, exact replication is difficult. Does that mean we cannot develop scientific facts, remember the definition, outside the laboratory? Unequivocally no! Different scientists collect observations, data, on a phenomenon of interest. They all have scientific data, facts, based on the reality of their observations or experiments. One might say that they have alternative facts, but since exact replication is not possible, are facts from one scientist “more factual” than those from another?

As you might expect, not all scientific data are created equal. It is well-established that some scientists are more careful, more precise, or are better observers of detail. So, at some point we are confronted with “alternative facts,” but the goal of science is explanation. So how do we sort out the alternative facts to find those most reliable, replicable, and consistent? We exclude hypotheses one at a time based on alternative facts (i.e. data) until we are left with the one hypothesis that best explains our phenomenon of interest. In this sense, there are alternative facts, since differing hypotheses are all based on data, so how do we determine which facts are best? In science, we use Occam’s razor (the law of parsimony) to determine which hypothesis is the best based on simplicity. The most parsimonious is preferable because it is the most easily testable. Replication of experiments or observations reveals the hypothesis that produces the most consistent results and offers the simplest explanation. Typically, conclusions from observations or experiments are evaluated as being consistent with a hypothesis or not. While I might not obtain the same results as another researcher, my results can still be consistent with a growing body of knowledge.

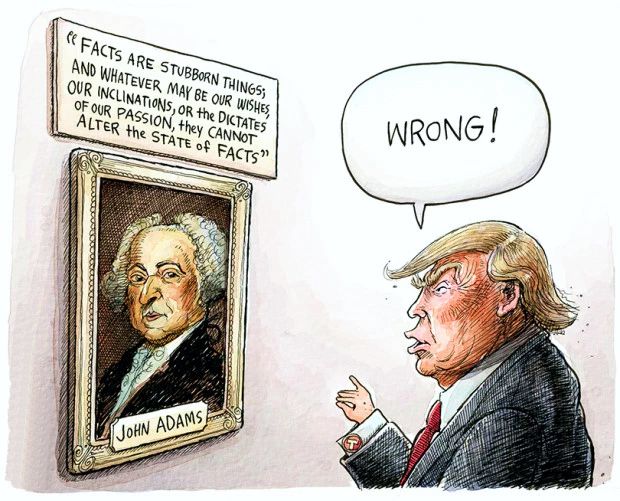

Now what does all this have to do with Kellyanne Conway’s “alternative facts” explanation of the contrasting views of the attendance figures for the recent inauguration? Sean Spicer, President Trump’s Press Secretary, attempted to rebut the national news media’s estimate of the size of the crowd on the National Mall, asserting that the attendance was the largest of any inauguration. Estimating crowd size is a difficult task at best, but there are scientific techniques available (see Sources). Without referencing sources, techniques employed, and statistical analyses used, crowd size estimates have no more accuracy than simple opinions. It is equivalent to the oft-heard phrase “authorities agree…” used by those advocating their own ideas.

Claims about any issue remain nothing more than opinions if scientific methods are not employed. Given the climate of politics in the US right now, and the contentiousness that exists between the national news media and the White House, assessments of crowds, economic indicators, and the like should be specified in greater detail so that they may rise above individual opinion. If media outlets, and the White House as well, don’t take time to obtain the best possible data (read: facts), any discussion will simply degenerate into reliance on “alternative facts” or opinions. As Huey P. Long said, “Opinions are like assholes. Everybody has one, and some smell more than others.”

Sources

Botta, F., H.S. Moat, and T. Preis, 2015. Quantifying crowd size with mobile phone and Twitter data. Royal Society Open Science, 2(5): 150162.

Chan, A.B. and N. Vasconcelos, 2012. Counting People With Low-Level Features and Bayesian Regression. IEEE Transactions on Image Processing, 21(4): 2160-2177.

Craven McGinty, J.O. and L. Schwartz, 2015. On counting crowd size—and its contentious history. Wall Street Journal, 266(92): A2.

Hashemzadeh, M., G. Pan, and M. Yao, 2014. Counting moving people in crowds using motion statistics of feature-points. Multimedia Tools & Applications, 72(1): 453-487.

Tang, N.C., Y.-Y. Lin, M.-F. Weng, and H.-Y.M. Liao, 2015. Cross-camera knowledge transfer for multiview people counting. IEEE Transactions on Image Processing, 24(1): 80-93.

van Rijn, N., 2001. The ‘science’ of people counting. Toronto Star, 29 July, p. A6.

EO Smith

Alternative Facts and Science - 24 January, 2017